So, deep learning. Have you heard about it? If you work in the tech sector, you probably have. Every week there is news about people using it to solve all sorts of interesting challenges.

Because of all the interest (and sometimes raw hype) around deep learning, you might believe that it's some sort of revolutionary new technology. Well, yes and no: until recently, limitations in backpropagation efficiency and hardware kept us from using neural networks to their fullest, so there are lots of new practical applications. On the other hand, the underlying concepts and ideas (artificial neural networks) have been around for a while.

Artificial neural networks (ANNs) are a family of computational models based on biological neural networks, like the ones we can find in our brains. These systems can be 'trained' to perform certain computational tasks (like classification) by providing examples and letting them infer rules from them, instead of explicitly programming the rules like in regular software development.

Neural networks are not a new concept, and their popularity has varied a lot over time. Originally, they were intended to build artificial replicas of our brains and other systems with general intelligence. After initial failures (mostly due to the limited computational power of machines of the era), they were abandoned in favor of alternatives that looked more promising.

With the advent of powerful hardware, GPUs with thousands of cores and other advances in computation, neural networks now provide a powerful tool for creating flexible systems that learn from experience. The exciting field of deep learning is heavily based on neural networks. Therefore, understanding how neural networks function is a prerequisite if you want to be part of the deep-learning revolution.

In this series, we will learn about neural networks and build them from scratch. We will start with extremely simple examples and work our way through the most important deep learning concepts.

Bio-inspired learning

Artificial neural networks are an example of biologically inspired computing. In this case, the inspiration is drawn from the human brain and its main building block: the humble neuron.

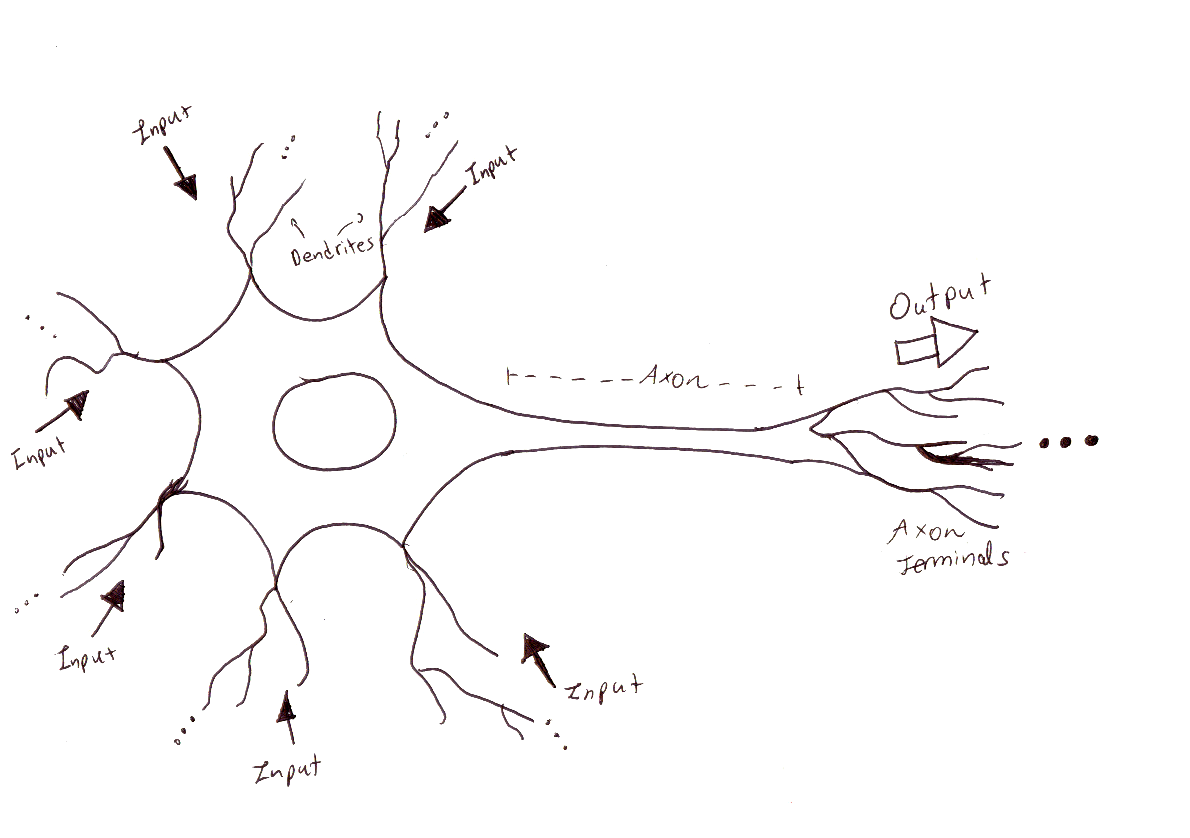

The behavior of a neuron can be modeled in the following way:

Dear biologist/neuroscientist/person who understands neuronal physiology, we know this is not exactly what's going on in our neurons. Please understand this is just a simplified model we use to ease our studies, and don't call the brain police. Thank you.

This is what's going on:

- Dendrites work as the inputs for the neuron. These are connected to the output terminals of other neurons and receive electrochemical signals.

- When a neuron receives input signals, it doesn't always produce an output. The sum of all signals needs to be strong enough to cross a threshold in order to produce an output.

- A neuron can have multiple inputs, and the sensitivity of each input can be different. This means that individual inputs have greater or smaller contributions towards crossing the threshold. In other words, neurons have weighted inputs.

- If the threshold is crossed, neurons produce an output that travels towards their axon terminals. This output works as the input for other neurons and the process repeats.

Now that we understand this, we can model a neuron as a block that:

- Receives multiple inputs.

- Performs a weighted sum of the inputs and, if the sum satisfies a condition (the threshold), produces an output.
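The two bullets above can be sketched in a few lines of Python. This is only a minimal illustration, not a model taken from this series: the function name `threshold_neuron`, the example signals, weights and thresholds are all made up, and returning 1 or 0 is just one simple choice for what "producing an output" means.

```python
def threshold_neuron(inputs, weights, threshold):
    """Hypothetical simplified neuron: output 1 if the weighted sum reaches the threshold."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    # Weighted sum: each input contributes in proportion to its weight.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # The neuron only produces an output when the threshold is reached.
    return 1 if weighted_sum >= threshold else 0


# Made-up example values, for illustration only.
signals = [1.0, 0.5, 2.0]
sensitivities = [0.25, 1.0, 0.5]

# The weighted sum here is 0.25 + 0.5 + 1.0 = 1.75.
print(threshold_neuron(signals, sensitivities, threshold=1.5))  # fires: 1
print(threshold_neuron(signals, sensitivities, threshold=2.0))  # stays silent: 0
```

The same inputs can fire the neuron or not depending on the threshold, and changing a single weight changes how much that input counts towards crossing it.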

You can have different types of neurons by altering the number of inputs, the number of outputs or the specific condition that must be met to produce the output. With that said, let's build a very simple network consisting of only 1 neuron.

Humble Neuronal Beginnings: 1-input, 1-output

Let's take a look at the simplest neural network possible: a network with one input and one output. This network takes the input and multiplies it by a weight value. You can visualize the weight as the factor by which the neural network scales the input.

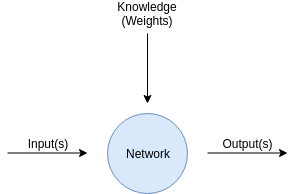

Later we will discuss the learning process of a neural network. For now, just remember this: the weights are where the knowledge of the network resides, and the process of learning revolves around tuning the weights until we get good predictions.

A neural network grabs the inputs (the data we feed in) and scales them using the values of the weights (the 'knowledge' of the network). The learning process consists of adjusting those weights so that the inputs produce the right outputs.

Let's build something from scratch: an extremely simple neural network, one of the smallest we can imagine.

Suppose we are trying to create a small NN that lets us estimate how many calories (in kcal) I'll burn after jogging for a given number of minutes.

If you happen to be an expert in this topic, please excuse my totally invented numbers.

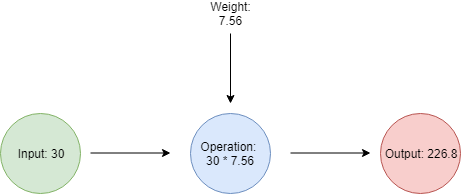

This network has 3 important parts:

- Input: A single input, the number of minutes I spent jogging.

- Weight: A single weight, in this case with the value 7.56.

- Output: A single output, the number of calories I will burn after jogging.

The neural network will receive information in the form of an input (in this case, the number of minutes I ran) and knowledge in the form of a single weight with a value of 7.56. It will estimate the output by combining these two pieces of information through a multiplication.

So, suppose today I ran 30 minutes. I feed this number into our network, which multiplies it by the weight of 7.56 and estimates I burned around 227 kcal (30 × 7.56 = 226.8).

Our neural network is extremely simple. You can easily model it in Python as:

    def simple_neural_network(input_information, weight):
        return input_information * weight

The following code is a small demo that estimates how many calories I will burn after running for 30 minutes:

    def simple_neural_network(input_information, weight):
        # The whole "network": scale the input by the weight.
        return input_information * weight

    calculated_weight = 7.56  # the network's "knowledge"
    minutes_jogging = 30      # the input we feed in

    calories_burned = simple_neural_network(minutes_jogging, calculated_weight)

    print("According to my neural network, after running {} minutes, I burned {} calories".format(minutes_jogging, calories_burned))

Save this code in a file named simple_nn.py and run it using the Python 3 interpreter:

    python3 simple_nn.py

    According to my neural network, after running 30 minutes, I burned 226.79999999999998 calories
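The long tail of digits in that output is ordinary floating-point rounding, not a flaw in the network. Here is a small sketch, assuming we are happy to display one decimal place, that reuses the same function and formats a few estimates. The `durations` list is made up for illustration.

```python
def simple_neural_network(input_information, weight):
    return input_information * weight


calculated_weight = 7.56  # same invented weight used in the article

# Hypothetical jogging durations, in minutes.
durations = [15, 30, 45]

for minutes in durations:
    calories = simple_neural_network(minutes, calculated_weight)
    # ":.1f" rounds only the printed text; the computed value is unchanged.
    print(f"{minutes} minutes -> about {calories:.1f} kcal")
```

Because the network is a single multiplication, doubling the input doubles the estimate. That is a direct consequence of having only one weight and nothing else.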

This is the first prediction we made using a neural network. Awesome, isn't it?

It might seem a bit too simplistic, but understanding how neural networks estimate outputs based on their inputs and weights is the foundational knowledge a field as big as deep learning is based upon.

Ok, cool, but how did you know the correct weight value is 7.56??

I don't, I invented that number.

Neural networks learn weights from examples. You provide the network with a lot of examples that relate the inputs to the outputs, and through different methods, it estimates weights that produce good estimates. In our case, we would need to gather a lot of data pairs that relate the number of minutes a person ran to the number of calories they burned, and the network would find the weights from those examples.
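As a rough preview of what "finding the weights from examples" can mean, here is a minimal sketch. Everything in it is an assumption made for illustration: the four data pairs are invented (just like the 7.56), the helper name `fit_single_weight` is hypothetical, and the least-squares formula it uses is only one of several possible methods, not necessarily the one this series will build.

```python
def fit_single_weight(inputs, targets):
    """Least-squares weight for the model: target is roughly input * weight (no bias term)."""
    numerator = sum(x * y for x, y in zip(inputs, targets))
    denominator = sum(x * x for x in inputs)
    if denominator == 0:
        raise ValueError("need at least one non-zero input")
    return numerator / denominator


# Invented example data: minutes jogged and kcal burned (not real measurements).
minutes_examples = [10, 20, 30, 45]
kcal_examples = [78, 149, 229, 338]

learned_weight = fit_single_weight(minutes_examples, kcal_examples)
print("Weight estimated from the examples: {:.2f}".format(learned_weight))
```

With these made-up pairs the estimate lands close to 7.5. Different examples would give a different weight, which is the whole point: the number comes from the data rather than from the programmer.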

How to find the right weights is the topic of future articles in this series. We will come back to this after dealing with the estimation process. For now, just trust those weight values to be reasonably correct.

To be honest, I didn't come up with that number entirely at random. I searched Google for how many calories a person burns after running for varying amounts of time, and estimated that the 7.56 ratio was a good-enough approximation. Of course, there are lots of other factors that influence the number of calories burned: the speed at which the person ran and the person's body weight, among others.

In the next article, we will learn how neural networks can take several inputs into consideration to produce better estimates.

What to do next

- Share this article with friends and colleagues. Thank you for helping me reach people who might find this information useful.

- You can find the source code for this series in this repo.

- This article is based on Grokking Deep Learning and on Deep Learning (Goodfellow, Bengio, Courville). These and other very helpful books can be found in the recommended reading list.

- Send me an email with questions, comments or suggestions (it's in the About Me page).